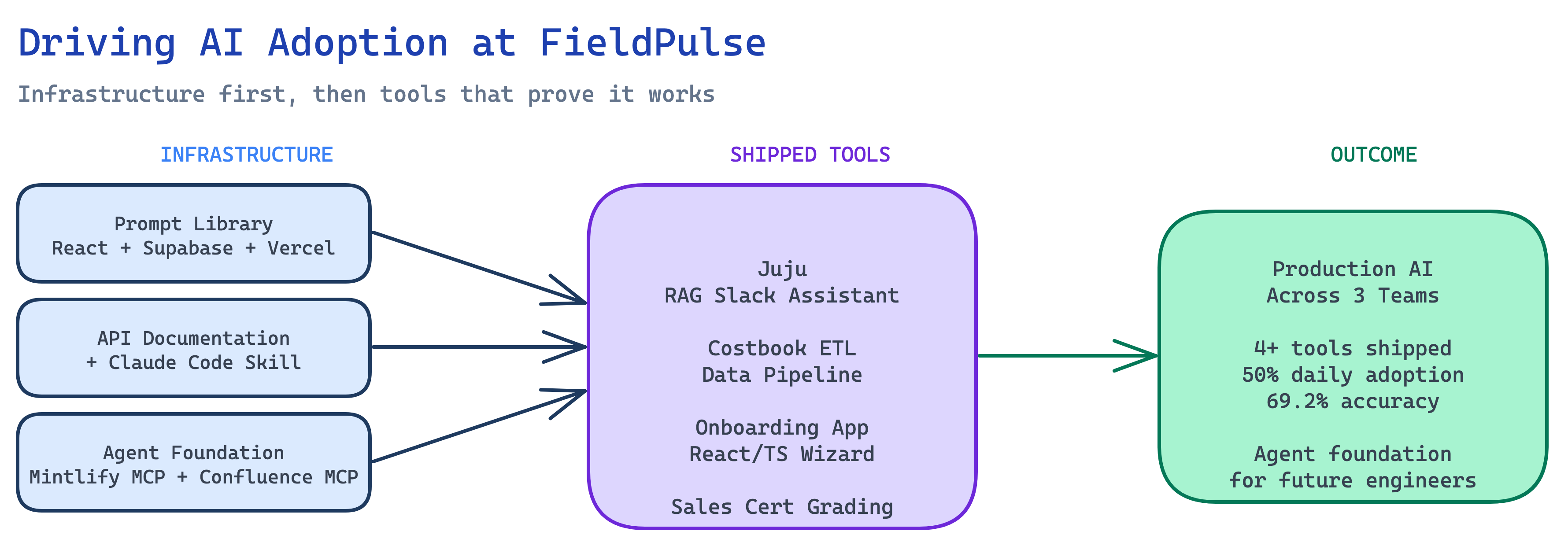

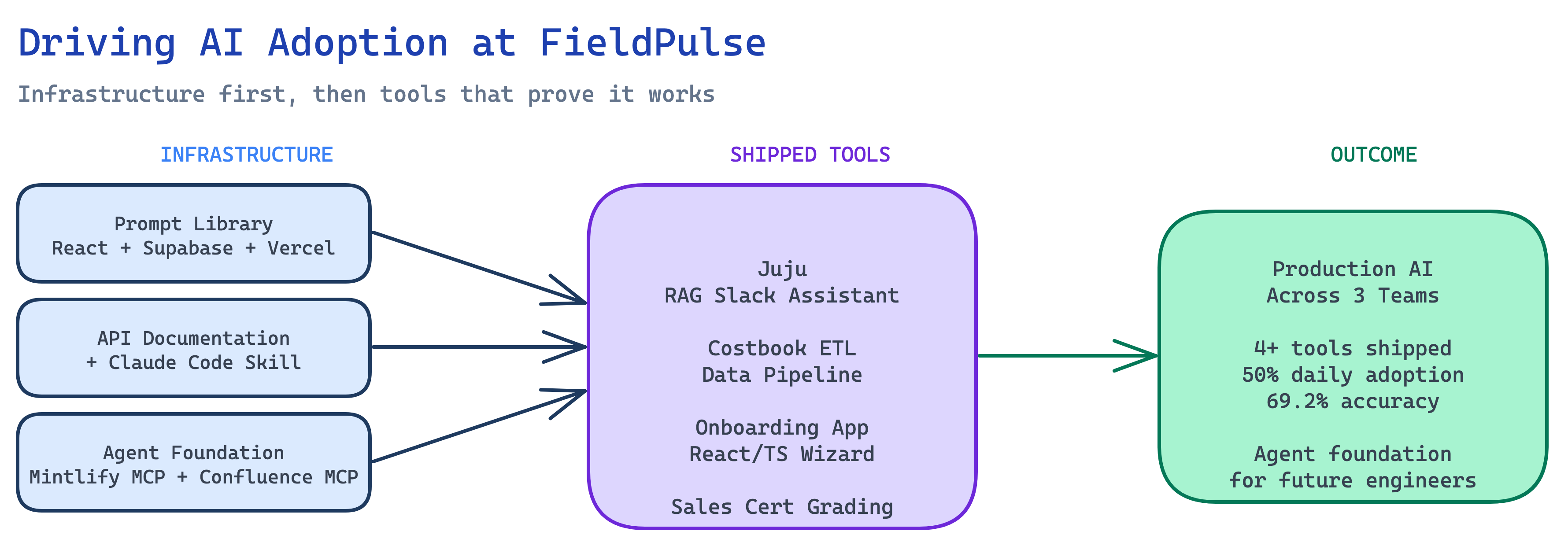

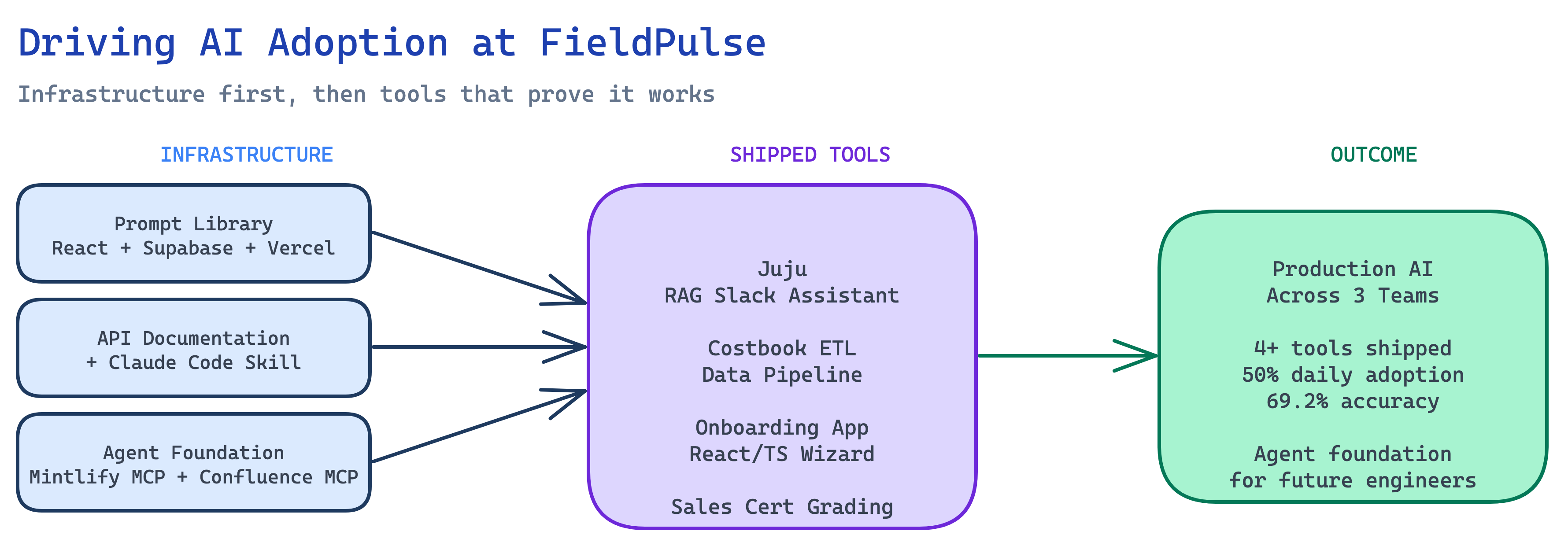

A project about projects. Building the conditions under which AI adoption compounds — not just individual tools, but organizational capability.

Context

When I joined FieldPulse in October 2025, the company had genuine interest in AI but no shared foundation. Individual people were using ChatGPT for their own workflows, and there were exploratory conversations about what AI could do for the product, but there were no prompt standards, no internal tools, and no shared record of what had been built or what worked. Every person's AI usage was a private experiment. The job I took on, formally and informally, was to change that.

Problem

The challenge wasn't convincing people that AI was useful. The challenge was structural:

No shared knowledge

Prompts that worked well lived in one person's browser history. When that person closed the conversation, the knowledge went with it.

No documented internal context

AI tools are only as useful as the context you give them. FieldPulse's APIs, data models, and workflows weren't documented in a form AI agents could use.

No onboarding path for AI tooling

New engineers had no established way to use AI agents effectively in the FieldPulse context specifically — not AI in general, but AI applied to FieldPulse's particular systems.

No production patterns

The gap between "interesting demo" and "tool that ships and gets used" is enormous. Closing it requires repeatable patterns, not individual heroics.

Approach

The strategy was three parallel workstreams — a shared prompt repository so institutional knowledge persists, structured API documentation so agents have real context, and a reference architecture so the next engineer to build an AI tool doesn't start from scratch. The hero projects in this portfolio are the proof layer: each one is a production system that runs on this infrastructure.

Prompt Library

Shared, searchable repository organized by team and use case.

API Documentation + Claude Code Skill

Structured docs wrapped into a reusable context block for agent sessions.

Agent Foundation

Reference architecture with MCP connections so new agents start from a working foundation.

What Was Built

Each layer solves a different adoption bottleneck. Together, they form the foundation that every production AI tool at FieldPulse builds on.

Prompt Library

A React + Vite + Tailwind + Supabase internal web app deployed on Vercel. Prompts are organized by team (engineering, implementation, sales, support) with tagging, search, and individual prompt URLs. The admin dashboard gives visibility into what exists and where the gaps are. Every prompt has a copy button and a permalink, so the answer to "what prompt do you use for X" is a link, not a paste out of Slack. Built using the Compound Engineering methodology: structured feedback cycles with stakeholders, each round producing a defined change set executed as a discrete Claude Code session.
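The organization described above (prompts grouped by team, tagged, searchable, each with its own URL) can be illustrated with a small sketch. This is not the app's actual code: the `Prompt` shape, the `searchPrompts` and `promptPermalink` helpers, the sample records, and the `prompts.example.com` base URL are all assumptions made for illustration, and the Supabase layer is left out entirely.

```typescript
// Hypothetical prompt record; field names are illustrative,
// not the actual FieldPulse schema.
type Team = "engineering" | "implementation" | "sales" | "support";

interface Prompt {
  id: string;
  team: Team;
  title: string;
  body: string;
  tags: string[];
}

interface PromptQuery {
  team?: Team;
  tag?: string;
  text?: string;
}

// Return the prompts that match every filter that is set.
// Tag and text matching are case-insensitive.
function searchPrompts(prompts: Prompt[], query: PromptQuery): Prompt[] {
  const text = query.text?.trim().toLowerCase();
  const tag = query.tag?.trim().toLowerCase();
  return prompts.filter((p) => {
    if (query.team && p.team !== query.team) return false;
    if (tag && !p.tags.some((t) => t.toLowerCase() === tag)) return false;
    if (text && !`${p.title}\n${p.body}`.toLowerCase().includes(text)) return false;
    return true;
  });
}

// Build a stable link to a single prompt. The base URL is a placeholder.
function promptPermalink(baseUrl: string, prompt: Prompt): string {
  const trimmedBase = baseUrl.replace(/\/+$/, "");
  return `${trimmedBase}/prompts/${encodeURIComponent(prompt.id)}`;
}

// Made-up sample data for demonstration only.
const samplePrompts: Prompt[] = [
  {
    id: "eng-pr-review",
    team: "engineering",
    title: "PR review checklist",
    body: "Review this diff for correctness and missing tests.",
    tags: ["review", "code"],
  },
  {
    id: "sup-ticket-summary",
    team: "support",
    title: "Ticket summary",
    body: "Summarize this support ticket in three bullet points.",
    tags: ["summary"],
  },
];
```

With this shape, `searchPrompts(samplePrompts, { team: "engineering", tag: "review" })` narrows the list to one team and tag, and `promptPermalink` turns the result into something that can be pasted into a chat thread as a link.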

API Documentation + Claude Code Skill

The engineering team's AI usage was limited by the absence of structured internal context. An AI agent that doesn't know FieldPulse's API surface is a general-purpose tool. One that does is a FieldPulse-specific accelerator. The documentation initiative captured internal API endpoints, request/response shapes, authentication patterns, and common workflow sequences in structured markdown. Those docs were then wrapped into a Claude Code skill that gives agents FieldPulse-specific knowledge immediately on session start, rather than requiring extensive re-explanation every time.
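As a rough sketch of what "structured markdown wrapped into a reusable context block" could look like, the snippet below renders typed endpoint records into a single markdown string. Everything here is assumed for illustration: the `EndpointDoc` shape, the `renderContextBlock` helper, and the `/example/jobs/{id}` endpoint are hypothetical, and the packaging of the result as a Claude Code skill is not shown.

```typescript
// Hypothetical structure for one documented endpoint. The path and fields
// used below are placeholders, not FieldPulse's real API.
interface EndpointDoc {
  method: "GET" | "POST" | "PUT" | "PATCH" | "DELETE";
  path: string;
  summary: string;
  auth: string;
  requestExample?: unknown;
  responseExample?: unknown;
}

// Pretty-print a value as JSON, indented four spaces so that markdown
// renders it as a code block.
function indentJson(value: unknown): string {
  return JSON.stringify(value, null, 2)
    .split("\n")
    .map((line) => `    ${line}`)
    .join("\n");
}

// Render one endpoint as a markdown section.
function renderEndpoint(doc: EndpointDoc): string {
  const lines = [`## ${doc.method} ${doc.path}`, "", doc.summary, "", `Auth: ${doc.auth}`];
  if (doc.requestExample !== undefined) {
    lines.push("", "Request example:", "", indentJson(doc.requestExample));
  }
  if (doc.responseExample !== undefined) {
    lines.push("", "Response example:", "", indentJson(doc.responseExample));
  }
  return lines.join("\n");
}

// Join all endpoint sections under one title to form the context block.
function renderContextBlock(title: string, docs: EndpointDoc[]): string {
  return [`# ${title}`, ...docs.map(renderEndpoint)].join("\n\n");
}

// Made-up example entry for demonstration only.
const exampleDocs: EndpointDoc[] = [
  {
    method: "GET",
    path: "/example/jobs/{id}",
    summary: "Fetch a single job by id.",
    auth: "Bearer token in the Authorization header",
    responseExample: { id: "job_123", status: "scheduled" },
  },
];

const contextBlock = renderContextBlock("Internal API reference (example)", exampleDocs);
```

Keeping the docs as typed records and generating the markdown from them is one possible design: the same source could feed both human-readable pages and the agent context, so the two are less likely to drift apart.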

Agent Foundation

This is the infrastructure piece most likely to compound over time. It is a reference architecture with established MCP connections: Mintlify MCP for help center docs (any agent can query them directly without a custom RAG pipeline) and Confluence MCP for internal wiki and process documentation. It also includes established patterns for how agents authenticate and call FieldPulse's internal endpoints. When the next engineer wants to build an AI agent for an internal use case, they start from a working set of connections, documented patterns, and reference examples, not from scratch.
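One way to picture "starting from a working set of connections" is a shared registry that new agents resolve by name. The sketch below is an assumption-heavy illustration, not the actual foundation: the `McpConnection` shape, the connection names, the `resolveConnections` helper, and the `example.invalid` URLs are all hypothetical, and no real MCP client calls are made.

```typescript
// Hypothetical registry entry for a shared MCP connection. Names and URLs
// are placeholders; the real configuration is not described in the source.
interface McpConnection {
  name: string;
  purpose: string;
  url: string;
}

const sharedConnections: McpConnection[] = [
  {
    name: "help-center-docs",
    purpose: "Help center docs via Mintlify MCP",
    url: "https://mcp.example.invalid/help-center",
  },
  {
    name: "internal-wiki",
    purpose: "Internal wiki and process docs via Confluence MCP",
    url: "https://mcp.example.invalid/wiki",
  },
];

// Resolve the connections a new agent asks for, failing fast on a name
// that is not in the registry so misconfiguration surfaces at startup.
function resolveConnections(
  requested: string[],
  registry: McpConnection[] = sharedConnections,
): McpConnection[] {
  return requested.map((name) => {
    const connection = registry.find((c) => c.name === name);
    if (!connection) {
      throw new Error(`Unknown MCP connection: ${name}`);
    }
    return connection;
  });
}
```

A new agent would then declare what it needs, for example `resolveConnections(["help-center-docs"])`, instead of wiring up each server from scratch.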

Outcome

4+ tools shipped to production

~50% daily adoption (Juju)

69.2% accuracy post-eval

3 teams using AI daily

FieldPulse went from individual AI experiments with no shared infrastructure to a set of production tools, a documented agent foundation, and an onboarding path for AI tooling. The work is not finished — it's in a state where it can compound.

Each of the hero projects below is a standalone case study. Together, they're the evidence base for this initiative.

Juju: RAG in Production →

The flagship internal AI tool. Five months in production, eval pipeline, now being re-architected on the MCP foundation built here.

Costbook ETL Pipeline →

Bronze/Silver/Gold data pipeline turning HVAC supplier price books into importable Costbook data. Most technically complex project in the portfolio.

Customer Onboarding App →

Full product cycle: PRD, a 15-module React/TS wizard, Salesforce integration, and deployment. End-to-end product ownership.